Key takeaways

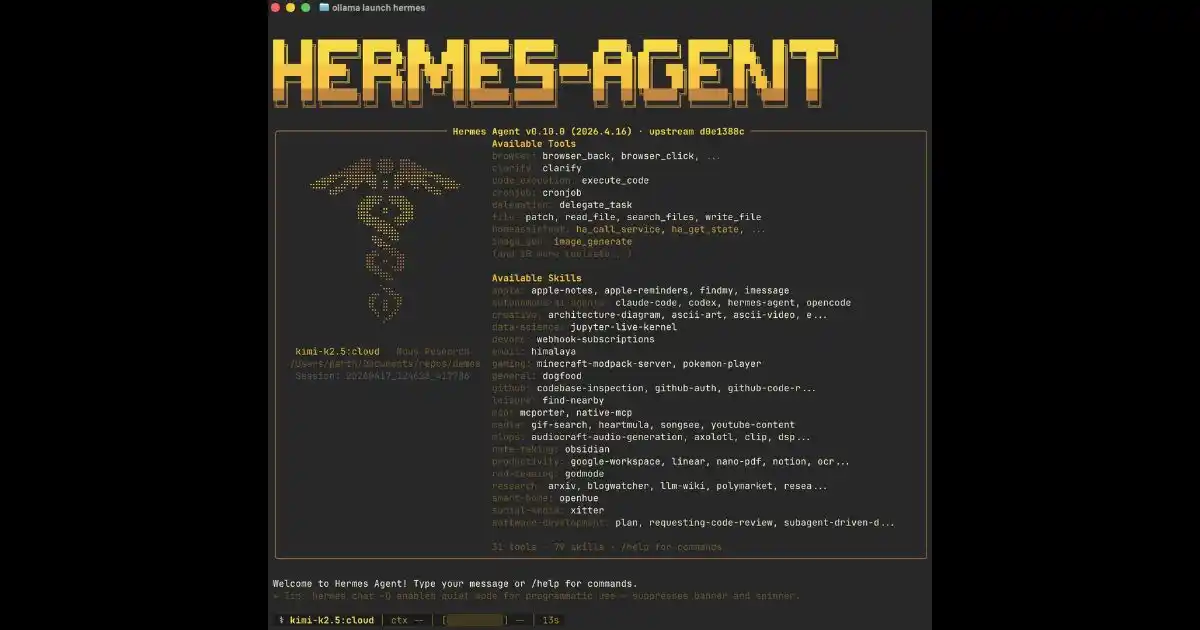

- Hermes Agent is a fully open-source autonomous AI agent (MIT license) built by Nous Research, launched February 25, 2026 — with a real learning loop that auto-generates skill documents after every complex task

- Its multi-layer memory system (Honcho dialectic modeling + FTS5 search) understands how you work — not just what you ask

- In production, failure mode #1 is missing a dedicated persistent Docker volume for /skills and /memory — accumulated skills are wiped on every container restart

- Hermes beats OpenClaw on autonomous error recovery (22% better) and long-horizon tasks; OpenClaw still leads on native multi-agent orchestration

- Hermes Agent’s competitive edge is measured in weeks of accumulated run time — the earlier you deploy, the richer your agent’s skill base becomes

Hermes Agent — What It Actually Is and Why It’s Different

Hermes AI Agent is an open-source autonomous AI agent built by Nous Research, publicly launched on February 25, 2026. This is the same team behind the Hermes LLM model family — they understand inference from the inside. And it shows in the architectural choices.

Most people get this wrong: they call anything that chains two API calls with an LLM an "AI agent." That's not automation; that's a liability. A real autonomous agent does three things chatbot wrappers don't:

- It acts on multi-step tasks — without human intervention at every stage

- It remembers across sessions — no reset, real persistent context

- It improves its own working methods — that’s where everything changes

Hermes Agent ships under a full MIT license. You self-host it, your data stays on your infrastructure, and Nous Research controls nothing about your deployment. In 2026, with compliance requirements tightening, that matters.

Key point: Hermes Agent is not a chatbot with memory. It’s an agent with a persistent learning loop — it extracts its own skills from every task it executes and reuses them automatically. The difference is measured in weeks of run time, not version numbers.

The Hermes Learning Loop — How It Actually Gets Smarter

This is the section most Hermes Agent articles rush through. They mention "self-learning" as a marketing feature. Let me be specific about what's actually happening under the hood.

The Hermes loop follows an exact sequence: Execute → Analyze → Extract Skill → Refine. After every complex task, the agent reviews its own work, identifies patterns that worked, and generates a Markdown file — a skill document — inside the /skills directory. That file contains human-readable instructions plus real code examples.

The next time a similar task comes in, Hermes loads that skill doc — not the full prompt. Concrete result: fewer tokens consumed, higher precision, fewer errors. Internal benchmarks from Nous Research (April 2026) show 40% faster execution on complex tasks after skill accumulation.
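To make the "load the skill doc, not the full prompt" step concrete, here is a toy sketch in Python. It is not Hermes Agent's actual code: the `find_skill` function, the keyword-overlap scoring on each file's first line, and the sample file names are assumptions made for illustration. Only the idea of Markdown skill files sitting in a skills directory comes from the description above.

```python
import re
import tempfile
from pathlib import Path
from typing import Optional, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def find_skill(task: str, skills_dir: Path, min_overlap: int = 2) -> Optional[Path]:
    """Return the skill document whose title shares the most words with the task.

    Hypothetical matching rule: count the words shared between the task
    description and the first line of each .md file. Returns None when no
    file reaches min_overlap shared words.
    """
    task_words = tokenize(task)
    best_path, best_score = None, 0
    for path in sorted(skills_dir.glob("*.md")):
        lines = path.read_text(encoding="utf-8").splitlines()
        title = lines[0] if lines else ""
        score = len(task_words & tokenize(title))
        if score > best_score:
            best_path, best_score = path, score
    return best_path if best_score >= min_overlap else None


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        skills = Path(tmp)
        (skills / "rotate-logs.md").write_text(
            "# Rotate nginx logs weekly\n", encoding="utf-8"
        )
        (skills / "deploy.md").write_text(
            "# Deploy the staging stack\n", encoding="utf-8"
        )
        match = find_skill("rotate the nginx logs on web-01", skills)
        print(match.name if match else "no skill found")
```

A real agent would more plausibly match on embeddings or on the full-text index than on title words, but the control flow is the same: look for a stored procedure first, and fall back to the full prompt only when nothing matches.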

Hermes organizes its memory into three distinct layers:

| Memory Type | What It Stores | Duration | Used When |
|---|---|---|---|
| Short-term | Current session context | Active session | Every interaction |
| Episodic | Past experiences, decisions made | Persistent | Similar tasks detected |
| Procedural | Skill documents, validated workflows | Persistent | Recurring complex tasks |

Jared H. Garr's note: Here's what actually happens in production: after every complex task, Hermes automatically generates a .md file inside /skills. Next time a similar task comes in, it loads that doc, not the full prompt. Fewer tokens, better precision. That's what you want for the long haul.

Memory System — What Other Agents Don’t Do

Talking about "persistent memory" in 2026 is table stakes. The real question is: what concrete architecture actually holds up in production across several weeks?

Hermes uses Honcho for dialectic user modeling. This isn't just "remembering your preferences." It builds an evolving representation of how you work: your decision patterns, your recurring behaviors, your implicit priorities. The agent doesn't ask about your preferences: it infers them and refines them session after session.

Search is handled by FTS5 session search — full-text search across all past sessions with on-the-fly LLM summarization. In practice, the agent can surface any technical decision made three weeks ago, contextualize it, and use it as the foundation for the current task.

I’ve seen this in production in our stack at Rebirth Distribution. After three weeks of running, Hermes started proactively suggesting weekly reviews — without anyone asking. It had simply learned that was our working pattern. This isn’t theory. That’s what the heartbeat system does: periodic checks that update memories without manual triggers.

- Honcho dialectic modeling — evolving user profile built from real behavior patterns

- Agent-curated memory — Hermes decides what to retain, not you

- FTS5 full-text search — retrieve any past session in natural language

- Heartbeat system — proactive memory updates without manual triggers
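The full-text search layer can be illustrated with SQLite's FTS5 extension directly. This is a minimal sketch, not Hermes's schema: the `sessions` table, its columns, and the sample rows are invented for the example, and it assumes the Python `sqlite3` module was built with FTS5 enabled, which is the case for most standard builds. The LLM summarization step is left out.

```python
import sqlite3


def build_index(rows):
    """Create an in-memory FTS5 table and load (session_id, content) rows."""
    conn = sqlite3.connect(":memory:")
    # session_id is stored alongside the text but excluded from matching
    conn.execute(
        "CREATE VIRTUAL TABLE sessions USING fts5(session_id UNINDEXED, content)"
    )
    conn.executemany("INSERT INTO sessions VALUES (?, ?)", rows)
    return conn


def search(conn, query, limit=5):
    """Return (session_id, snippet) pairs, best BM25 match first."""
    sql = (
        "SELECT session_id, snippet(sessions, 1, '[', ']', '...', 8) "
        "FROM sessions WHERE sessions MATCH ? ORDER BY rank LIMIT ?"
    )
    return conn.execute(sql, (query, limit)).fetchall()


if __name__ == "__main__":
    conn = build_index([
        ("2026-04-02", "Decided to move the job queue from Redis to Postgres for durability"),
        ("2026-04-09", "Weekly review: pruned stale skills and rotated API keys"),
        ("2026-04-16", "Debugged a Postgres connection pool timeout in staging"),
    ])
    for session_id, snippet in search(conn, "postgres AND queue"):
        print(session_id, snippet)
```

Note that `MATCH` takes FTS5 query syntax, not free text. A natural-language question would first have to be reduced to search terms, presumably by the model, before it reaches the index.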

Hermes Agent vs OpenClaw — The Real 2026 Comparison

80% of articles about Hermes AI Agent compare it to OpenClaw. Most of them do it wrong. Here’s the comparison that actually matters when you’re deciding what to deploy in production.

The real cost of picking the wrong tool is paid in incidents, debugging time, and dependency on whoever knows how to restart the thing at 2 a.m.

| Criteria | Hermes Agent | OpenClaw |
|---|---|---|
| Memory system | Multi-layer persistent (Honcho + FTS5) | SOUL-based, less granular |
| Error recovery | Autonomous, 22% better (Long-Horizon Task benchmark) | Manual reset required on SOUL logic break |
| Self-learning | Yes, auto-generated skill documents | No, static behavior |
| Multi-agent | Roadmap H2 2026 | Native today |
| Compatible models | 400+ via Nous Portal + local backends | OpenAI-centric + some providers |
| License | MIT, true open source | Open source |
| Best for | Long-term solo agent, recurring tasks | Complex multi-agent orchestration |

Let me be specific: the 22% better error recovery on Long-Horizon Tasks is not a minor detail. In production, an automation task that fails and requires manual intervention means someone waking up to restart it. On critical workflows, OpenClaw’s SOUL logic break is a real operational risk.

On the flip side, if your need today is native multi-agent orchestration — multiple specialized agents coordinating in real time — OpenClaw is ahead. Hermes is entering that territory in H2 2026.

"Hermes takes the lead on long-running and recurring tasks. OpenClaw remains the benchmark for multi-agent orchestration in 2026." (cristiantala.com analysis, April 2026)

Compatible Models — Hermes Agent Doesn’t Depend on OpenAI

LLM API vendor lock-in is this decade's version of the AWS lock-in of the 2010s. Most people get this wrong by picking an agent tightly coupled to a single provider.

Hermes Agent supports 400+ models via the Nous Portal, and works natively with local backends: Ollama, vLLM, llama.cpp, and any OpenAI-compatible endpoint. In practice, you can switch from GPT-4o to Llama 3 without touching your agent logic.

| Backend | Use Case | Estimated Cost | Setup Complexity |
|---|---|---|---|
| Ollama (local) | Dev, testing, sensitive data | Near-zero (local compute) | Low |
| vLLM (VPS) | High-performance production | Server cost only | Medium |
| Nous Portal | Managed production, 400+ models | Usage-based | Low |
| OpenAI / Anthropic | Mission-critical production | High at volume | Low |

For testing or sensitive data workloads: Ollama locally, with no external API calls and near-zero cost beyond your own compute. For long-term production with high volume: vLLM on a dedicated VPS, for predictable cost and controlled performance. To get started without infrastructure complexity: Nous Portal with a model from the Hermes series.
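Because Ollama and vLLM both expose an OpenAI-compatible HTTP API, switching backends can come down to changing a base URL and a model name. The sketch below builds the request by hand with the Python standard library; it is not Hermes Agent's configuration mechanism. The `BACKENDS` table, the model names, and the ports (the usual defaults, 11434 for Ollama and 8000 for vLLM) are assumptions to adapt to your setup.

```python
import json
import urllib.request

# Hypothetical backend registry. Base URLs use each server's default port;
# model names are placeholders for whatever you have pulled or deployed.
BACKENDS = {
    "ollama": {
        "base_url": "http://localhost:11434/v1",
        "model": "llama3",
    },
    "vllm": {
        "base_url": "http://localhost:8000/v1",
        "model": "meta-llama/Meta-Llama-3-8B-Instruct",
    },
}


def build_chat_request(backend: str, prompt: str) -> urllib.request.Request:
    """Build a POST request for an OpenAI-compatible /chat/completions endpoint."""
    cfg = BACKENDS[backend]
    payload = {
        "model": cfg["model"],
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=cfg["base_url"] + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(backend: str, prompt: str, timeout: float = 60.0) -> str:
    """Send the request and return the reply text. Needs a running server."""
    request = build_chat_request(backend, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Only the URL and model differ between backends; the payload shape is shared.
    for name in BACKENDS:
        print(name, build_chat_request(name, "ping").full_url)
```

The calling code never mentions a vendor. Moving from a local Ollama instance to a vLLM server is a one-line change to the backend name passed to `ask`.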

Deploying Hermes Agent in Production — What Breaks and How to Fix It

Every Hermes Agent review shows you the demo. None of them tell you what breaks after two weeks in production. I will.

Warning: The demo worked. Production didn't. Here's why: if you deploy Hermes without a dedicated persistent Docker volume for the /skills and /memory directories, every generated skill document is wiped on the next container restart. That's the first thing that breaks, and it's documented nowhere.
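One way to catch this before it costs you weeks of skills is a startup check that confirms the directories are actual mount points. The sketch below is an assumption-heavy illustration, not a Hermes feature: the helper names are hypothetical, and it parses the Linux `/proc/self/mountinfo` format, where the fifth whitespace-separated field of each line is the mount point. The sample text stands in for what a container would report.

```python
def mount_points(mountinfo_text):
    """Extract mount points (5th field) from /proc/self/mountinfo content."""
    points = set()
    for line in mountinfo_text.splitlines():
        fields = line.split()
        if len(fields) > 4:
            points.add(fields[4])
    return points


def missing_mounts(required, mountinfo_text):
    """Return the required directories that are not mount points."""
    mounted = mount_points(mountinfo_text)
    return [path for path in required if path not in mounted]


# Illustrative sample: the skills directory is mounted, the memory one is not.
SAMPLE = (
    "1234 1200 0:52 / / rw,relatime - overlay overlay rw\n"
    "1240 1234 8:1 /var/lib/docker/volumes/hermes_skills/_data /app/skills "
    "rw,relatime - ext4 /dev/sda1 rw\n"
)

if __name__ == "__main__":
    missing = missing_mounts(["/app/skills", "/app/memory"], SAMPLE)
    print("not backed by a mount:", missing)
```

Inside a real container you would pass the contents of `/proc/self/mountinfo` instead of `SAMPLE` and refuse to start when the list is non-empty. A mount is necessary but not sufficient: a tmpfs or an anonymous volume would pass this check and still lose data, so treat it as a smoke test.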

Here’s the minimal production-ready Docker configuration:

```yaml
version: '3.8'

services:
  hermes-agent:
    image: nousresearch/hermes-agent:latest
    restart: unless-stopped
    environment:
      - MODEL_BACKEND=ollama
      - OLLAMA_BASE_URL=http://ollama:11434
    volumes:
      # Named volumes keep skills, memory, and logs across container restarts
      - hermes_skills:/app/skills
      - hermes_memory:/app/memory
      - hermes_logs:/app/logs
    depends_on:
      - ollama

  ollama:
    image: ollama/ollama:latest
    restart: unless-stopped
    volumes:
      # Downloaded model weights persist here
      - ollama_models:/root/.ollama

volumes:
  hermes_skills:
  hermes_memory:
  hermes_logs:
  ollama_models:
```

Other failure modes to anticipate:

- FTS5 index rebuild after crash — If the container is killed during a database write, the SQLite FTS5 index rebuild can take several minutes. On a 2-vCPU VPS, plan for a health check with a generous timeout (60s minimum).

- Skill doc corruption — If the process is interrupted mid-generation, the Markdown file can be partial. Fix: atomic write via temp file + rename, or a volume with journaling enabled.

- No native Windows support — WSL2 required as of May 2026. On Windows Server environments, provision a WSL2 layer or switch to a Linux VPS. Native Windows support is on the roadmap but not yet available.

- Memory bloat over time — After several weeks, episodic memory can grow significantly. Implement a weekly pruning script on sessions not accessed in over 30 days.
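The "temp file + rename" fix for partial skill documents is a general pattern, sketched here in Python. The `atomic_write_text` name is mine, and whether Hermes exposes a hook where you could plug this in is not something this article establishes; the sketch only shows why the technique prevents half-written files.

```python
import os
import tempfile
from pathlib import Path


def atomic_write_text(path, text):
    """Write text so readers see either the old file or the complete new one."""
    path = Path(path)
    # The temp file must live in the same directory (same filesystem) as the
    # target, otherwise the final rename is not atomic.
    fd, tmp_name = tempfile.mkstemp(
        dir=path.parent, prefix=path.name, suffix=".tmp"
    )
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as tmp:
            tmp.write(text)
            tmp.flush()
            os.fsync(tmp.fileno())  # push the bytes to disk before renaming
        os.replace(tmp_name, path)  # swaps the file in as a single step
    except BaseException:
        # Remove the partial temp file; the original target stays untouched.
        if os.path.exists(tmp_name):
            os.unlink(tmp_name)
        raise


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp_dir:
        target = Path(tmp_dir) / "deploy-staging.md"
        atomic_write_text(target, "# Deploy staging\n\nStep 1 ...\n")
        print(target.read_text(encoding="utf-8"))
```

If the process dies before `os.replace`, the worst case is a stray `.tmp` file next to an intact skill document, which a cleanup pass can delete safely.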

For those who don’t want to manage infrastructure: Tencent Cloud Lighthouse has offered a one-click Hermes Agent deployment since April 14, 2026. It’s a valid starting point, but you lose control over persistent volumes. For long-term deployments, a dedicated Linux VPS remains the better approach.

Hermes Agent in 2026 — Where the Project Stands and What’s Coming

66,000+ GitHub stars, 208+ contributors, 3,200+ commits in under three months. That pace is comparable to the early days of projects that structurally reshaped the DevOps landscape.

What’s coming on the Nous Research roadmap:

- Multi-agent orchestration — announced for H2 2026. Hermes will enter direct competition with CrewAI, but with the differentiating advantage of shared persistent memory across agents.

- Native Windows support — actively in development, no confirmed release date.

- Reinforced model-agnostic optimization — better support for Llama 3.x and Mistral for 100% local deployments.

- Skill marketplace — community sharing of skill documents — still speculative but referenced in open GitHub issues.

Most people get this wrong: they wait for the tool to be "mature" before adopting. With a learning-loop agent, waiting means losing weeks of skill accumulation. Hermes Agent's competitive edge doesn't come from the version number; it comes from run time. An agent that's been running on your stack for 8 weeks is fundamentally more useful than one installed yesterday, regardless of version.

According to cristiantala.com's comparative analysis (April 2026), Hermes Agent is positioned to dominate the "personal multi-platform agent" segment in 2026–2027, primarily due to its lead in contextual persistent memory.

Frequently Asked Questions

Is Hermes Agent truly open source?

Yes: full MIT license, no commercial restrictions. You can self-host it, modify it, and integrate it into a commercial product. Nous Research doesn't control your data, your deployment, or your use. This is a genuine open-source license, not "open core" with the real features locked behind a paywall.

What’s the difference between Hermes Agent and a regular chatbot?

A chatbot responds. Hermes acts — and learns. The fundamental difference: a chatbot processes each message in isolation, forgets everything between sessions, and never does anything on its own. Hermes executes multi-step tasks without supervision, maintains persistent context across all sessions, and improves its own working methods through the learning loop. That’s not automation — it’s a full autonomous agent architecture.

Does Hermes Agent work with local models like Ollama?

Yes, natively. Ollama, vLLM, llama.cpp — all supported without complex configuration. You can deploy Hermes Agent 100% locally with zero external API calls. This is particularly relevant for sensitive data or environments without outbound internet access. The Nous Portal remains the simplest option to access 400+ models without managing inference infrastructure.

How does Hermes Agent create its own skills?

After every complex task, Hermes automatically generates a Markdown file inside the /skills directory. That file contains structured instructions plus real code examples extracted from the execution. The next time a similar task arrives, Hermes loads that skill document — not the full prompt — which reduces token consumption and improves accuracy. Skills are refined with each subsequent similar execution.

Does Hermes Agent run on Windows?

Not natively as of May 2026. WSL2 is required on Windows. For a serious production deployment, a Linux VPS (Ubuntu 22.04 LTS or Debian 12) is strongly recommended — more stable, fewer abstraction layers, cleaner Docker volume management. Native Windows support is on the Nous Research roadmap but has no confirmed release date.

Building on Hermes Agent: The Advantage of Starting Now

Hermes AI Agent rests on three pillars that hold up in production: a real learning loop with automatic skill document extraction, a multi-layer memory system that understands how you work, and true architectural freedom through 400+ model compatibility and a full MIT license.

The question isn't "is Hermes Agent mature enough?" The question is: how many weeks of accumulated skills do you want to have ahead of people who are still waiting for the next release?

This isn’t theory — deploy Hermes AI Agent today, and six weeks from now you’ll have an agent that knows your stack better than any tool you’ve used before.